Send Data from Salesforce to Data Cloud using Ingestion API and Flow : Meera R Nair

by: Meera R Nair

Blog post content copied from Meera R Nair - Salesforce Insights


In this blog post, we look at a Spring '24 feature: sending data to Data Cloud using Flows and the Ingestion API. The release note is available here.

Introduction

As we all know, Data Cloud helps us build a unified view of customers by harmonizing data from multiple source systems and producing meaningful segments and insights that can be used on other platforms for further processing.

If you are new to Data Cloud, refer to the Salesforce documentation or these videos on the Salesforce Developers YouTube channel.

What is Ingestion API

As per Salesforce documentation, Ingestion API is a REST API and offers two interaction patterns: bulk and streaming. The streaming pattern accepts incremental updates to a dataset as those changes are captured, while the bulk pattern accepts CSV files in cases where data syncs occur periodically. The same data stream can accept data from the streaming and the bulk interaction.

The Ingestion API is useful for sending interaction-based data to Data Cloud in near real time; the data is processed every 15 minutes.
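To make the streaming pattern more concrete, here is a minimal Python sketch of what a streaming request could look like when it is built by hand instead of through Flow. It only constructs the request and does not send it. The endpoint path and the `data` wrapper follow the streaming pattern as I understand it from the Salesforce documentation; the tenant host, the connector API name `Donation_Ingestion`, the object name, the field names, and the token are all placeholder assumptions for illustration.

```python
import json
from urllib.request import Request


def build_streaming_request(tenant_endpoint, source_api_name, object_name,
                            records, access_token):
    """Build (but do not send) a streaming ingestion request."""
    # Assumed path shape: /api/v1/ingest/sources/{connector}/{object}
    url = (f"https://{tenant_endpoint}/api/v1/ingest/sources/"
           f"{source_api_name}/{object_name}")
    # Records are wrapped in a top-level "data" array.
    body = json.dumps({"data": records}).encode("utf-8")
    return Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


request = build_streaming_request(
    tenant_endpoint="example.c360a.salesforce.com",  # hypothetical tenant host
    source_api_name="Donation_Ingestion",            # hypothetical connector API name
    object_name="Donation",
    records=[{
        "name": "D-0001",                            # hypothetical field names
        "category": "Education",
        "donation_amount": 50.0,
        "created_date": "2024-02-06T19:03:00Z",
    }],
    access_token="<data-cloud-access-token>",        # placeholder, not a real token
)
print(request.get_method(), request.full_url)
```

In the approach described in this post, the Flow action takes care of this call, so no such code is needed; the sketch is only meant to show the shape of the data that ends up in the data stream.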

Use Case

When external users submit donations from a public site, the donation details need to be sent to Data Cloud for segmentation and insights.

Implementation Details

1. Create a Donation custom object with the following fields:

- Name

- Category

- Donation Amount

2. Create a screen flow to input donation details

3. Add this flow component to a public site or Salesforce page

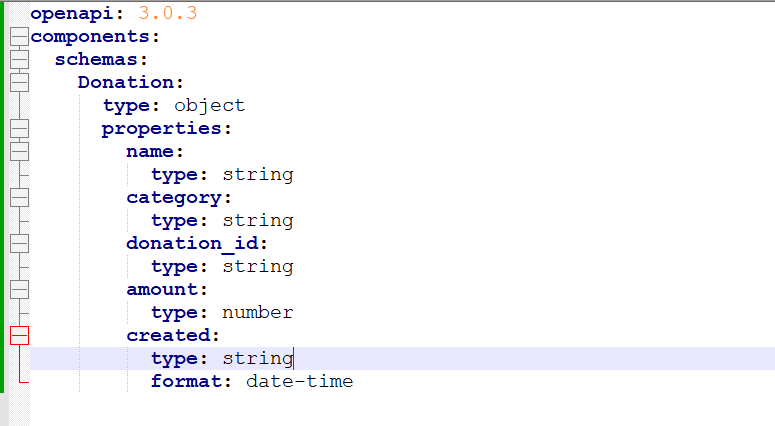

4. Create an Ingestion API connector and upload the schema

- Click the New button under Data Cloud Setup -> Ingestion API Connector

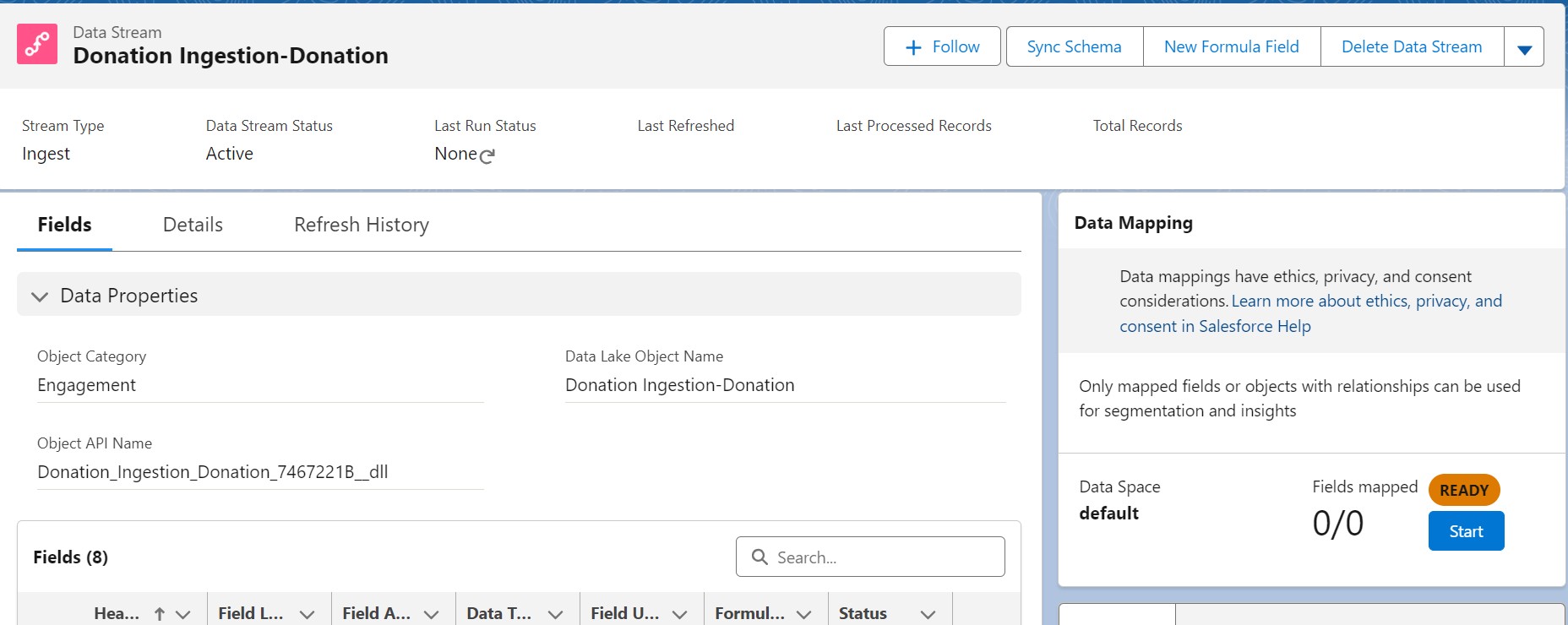
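The schema uploaded to the connector is an OpenAPI-format YAML file. As a rough sketch, the Python snippet below prints a schema for the Donation object. The field names (`name`, `category`, `donation_amount`, `created_date`) and their types are assumptions derived from the fields used in this post, not the exact file from the original setup, and the small `to_yaml` helper is a hypothetical convenience that only handles nested dictionaries of simple values.

```python
def to_yaml(node, indent=0):
    """Render nested dicts of scalar values as block-style YAML lines."""
    lines = []
    pad = "  " * indent
    for key, value in node.items():
        if isinstance(value, dict):
            lines.append(f"{pad}{key}:")
            lines.extend(to_yaml(value, indent + 1))
        else:
            lines.append(f"{pad}{key}: {value}")
    return lines


# Assumed schema: one object (Donation) with hypothetical field names.
donation_schema = {
    "openapi": "3.0.3",
    "components": {
        "schemas": {
            "Donation": {
                "type": "object",
                "properties": {
                    "name": {"type": "string"},
                    "category": {"type": "string"},
                    "donation_amount": {"type": "number"},
                    "created_date": {"type": "string", "format": "date-time"},
                },
            }
        }
    },
}

schema_yaml = "\n".join(to_yaml(donation_schema))
print(schema_yaml)
```

Saving the printed text to a `.yaml` file gives something that can be adapted for the upload step; writing the same YAML by hand works equally well.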

5. Create a Data Stream from the Ingestion API

- Create a new Data Stream

- Select the source for the Data Stream. In our case, it is Ingestion API

- Select the newly created Donation Ingestion API

- Configure the selected object and field details as shown below:

- Deploy the Data Stream

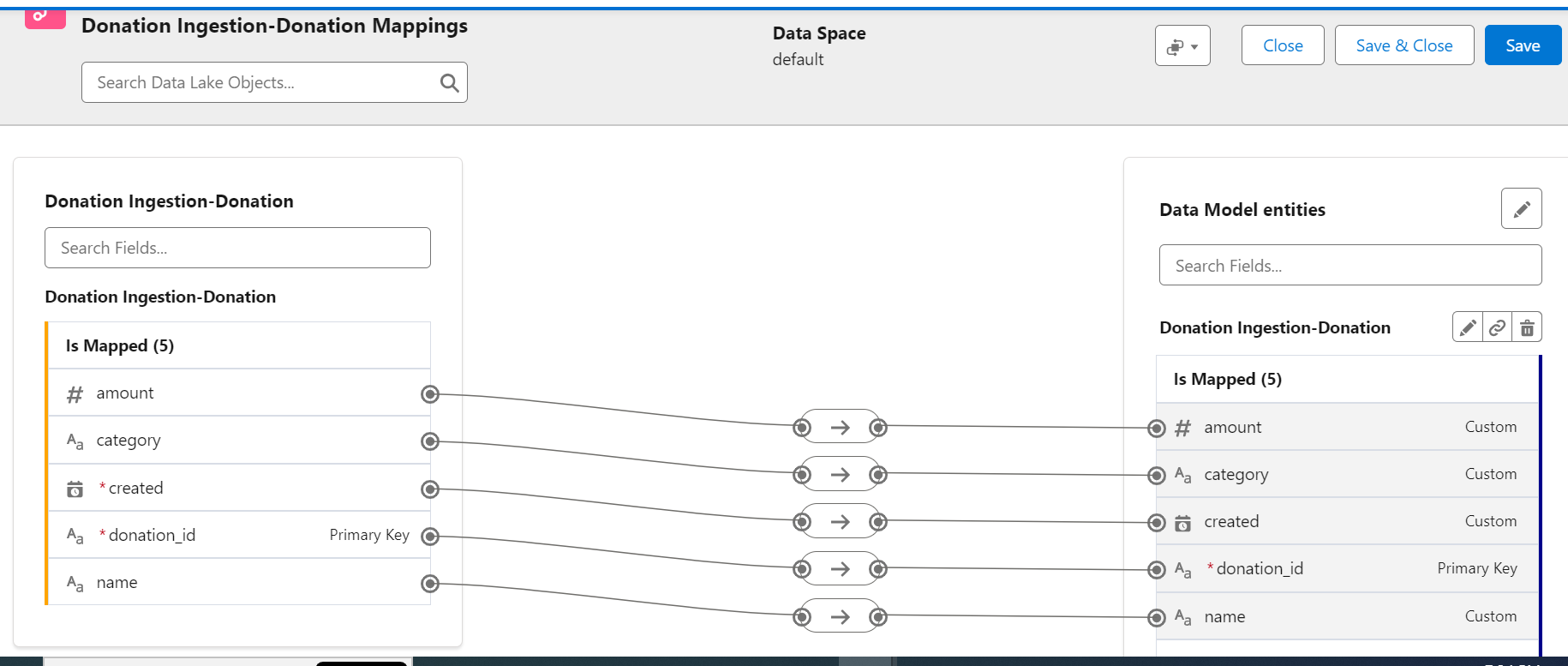

- Start Data Mapping and click on Select Objects

- Select the Custom Data Model Tab and click on the new Custom Object

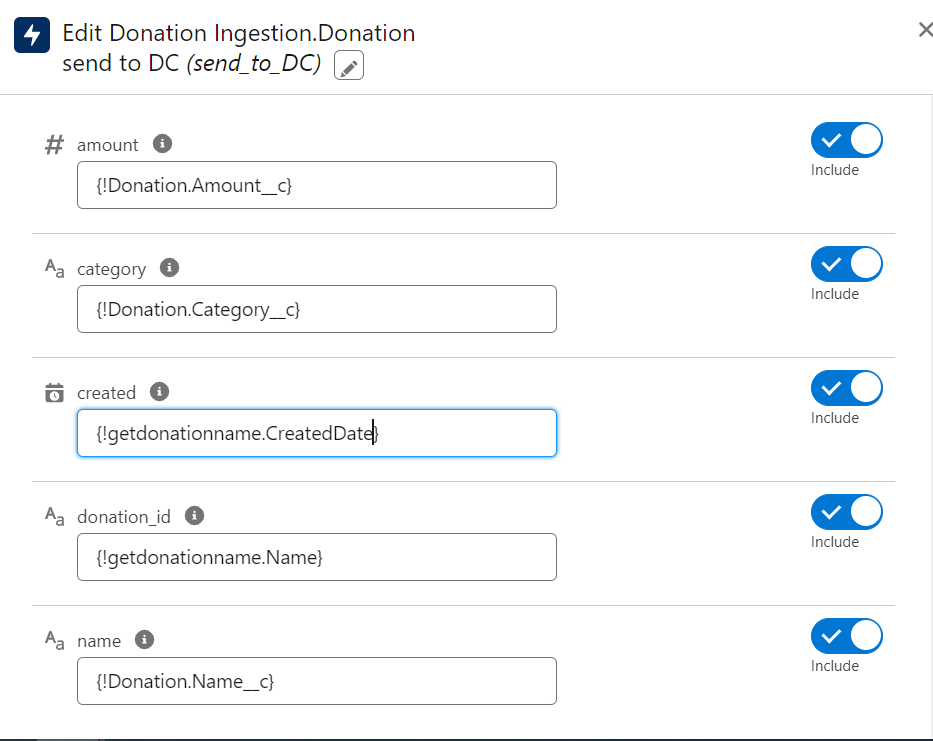

- Modify the flow to retrieve the new Donation record's name and created date

- Add a new Action in the screen flow and select "Send to Data Cloud" from the category

- The newly created Ingestion API connector, Donation Ingestion, will be available by default

- Map all the input variables

- Open the screen flow and enter the details

- Confirm that the record is created successfully

- In the Data Cloud app, go to the Data Explorer tab and check the Data Lake Object - Donation. We can see that the new record created in Salesforce is now synced with Data Cloud.
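The input variable mapping in the "Send to Data Cloud" action amounts to translating Salesforce field values into the fields defined in the connector schema. The following Python sketch illustrates that idea outside of Flow. The Salesforce field API names (`Category__c`, `Donation_Amount__c`) and the target field names are hypothetical, chosen to match the Donation object described above.

```python
from datetime import datetime, timezone

# Hypothetical mapping: Salesforce field API name -> connector schema field
FIELD_MAP = {
    "Name": "name",
    "Category__c": "category",
    "Donation_Amount__c": "donation_amount",
    "CreatedDate": "created_date",
}


def to_ingestion_record(sf_record):
    """Translate a Salesforce-style record into the schema's field names."""
    record = {}
    for sf_field, target_field in FIELD_MAP.items():
        value = sf_record[sf_field]
        if isinstance(value, datetime):
            # Send date-time values as ISO 8601 strings in UTC.
            value = value.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
        record[target_field] = value
    return record


donation = {
    "Name": "D-0001",
    "Category__c": "Education",
    "Donation_Amount__c": 50.0,
    "CreatedDate": datetime(2024, 2, 6, 19, 3, tzinfo=timezone.utc),
}
ingestion_record = to_ingestion_record(donation)
print(ingestion_record)
```

This is why the flow needs to retrieve the record name and created date before the action runs: every field in the schema needs a value to map from.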

February 06, 2024 at 07:03PM
